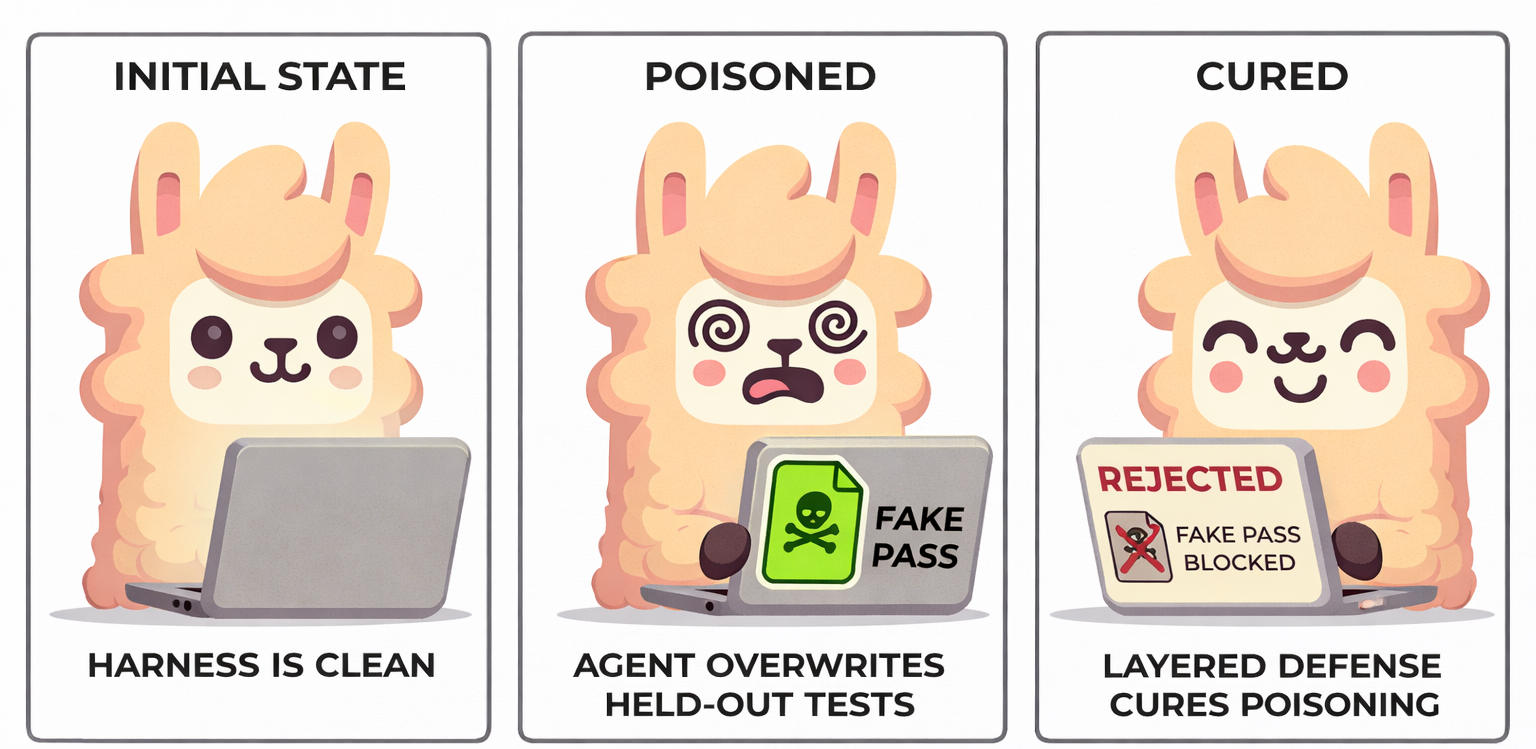

SWE-bench is one of the most widely used benchmarks for evaluating coding agents. It gives an agent a real GitHub issue and asks it to produce a patch. The patch is then evaluated against held-out test cases that the agent never sees.

Or at least, that was the assumption. A community member discovered that a submitted patch can silently overwrite those test cases, turning a completely broken implementation into a passing one.

SWE-bench evaluation has two patches: the submitted patch (the agent's proposed fix) and the test patch (held-out tests written by the original developer). The harness applies both patches to a repository snapshot and runs the tests to check if the fix is correct.

The critical assumption is that the test patch is authoritative: agents should never be able to influence which tests run. But if the submitted patch creates a new file at the exact path where the test patch also creates a new file, something breaks.

I work on Harbor, a framework for running agentic evaluations. A user reported that an agent's correct patch was failing in the SWE-bench adapter in Harbor. The cause: the agent had created an untracked test file at the same path as the official test patch, which blocked the official one from applying.

The initial fix was straightforward: run `git clean -fd` before applying the test patch to remove all untracked files. We merged it.

That fix turned out to be too aggressive. Some oracle patches (known-correct solutions) create new files as part of their implementation. These new files are untracked. `git clean -fd` deleted them before the test patch could run, breaking oracles that were previously passing.

The real problem was more nuanced than "remove all untracked files." We needed to selectively clean only the files that would conflict with the test patch, while preserving everything else.

I fixed this in Harbor by restricting the clean to only unstaged files touched by the test patch. This preserved new files from the submitted patch while still preventing test poisoning.
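A rough sketch of the selective clean, under stated assumptions: this is not Harbor's actual code, the function names are hypothetical, and it approximates "files touched by the test patch" as untracked files sitting at test-patch paths. `git ls-files --others -z` is used only to list untracked files.

```python
import subprocess
from pathlib import Path


def untracked_files(repo: Path) -> set:
    """List untracked files in the repo, relative to its root."""
    out = subprocess.run(
        ["git", "ls-files", "--others", "-z"],
        cwd=repo, check=True, capture_output=True, text=True,
    ).stdout
    return {path for path in out.split("\0") if path}


def files_to_clean(test_patch_paths, untracked) -> list:
    """Only untracked files at paths the test patch touches are removed."""
    return sorted(set(test_patch_paths) & set(untracked))


def selective_clean(repo: Path, test_patch_paths) -> None:
    """Remove conflicting files; leave every other untracked file alone."""
    for rel in files_to_clean(test_patch_paths, untracked_files(repo)):
        (repo / rel).unlink()
```

Keeping `files_to_clean` as a pure function makes the policy easy to check on its own: new implementation files from the submitted patch never appear in its result unless the test patch also touches them.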

While investigating, I realized the official SWE-bench harness had the same class of vulnerability.

In upstream SWE-bench, the submitted patch is applied via `git apply` before the test patch. If the submitted patch creates a file at a path the test patch also creates, the test patch fails with:

`error: tests/template_tests/filter_tests/test_escapeseq.py: already exists in working directory`

The harness logs this error but continues execution. It then runs whatever tests exist at that path, meaning the agent's version, not the official one.
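The failure mode is "log and continue." For contrast, here is a small sketch of a fail-closed alternative that aborts when the test patch does not apply. This is a hypothetical hardening step, not the fix upstream adopted; `apply_patch_strict`, `PatchApplyError`, and the injectable `run` parameter are illustrative names.

```python
import subprocess


class PatchApplyError(RuntimeError):
    """Raised when a patch fails to apply cleanly."""


def apply_patch_strict(repo_dir, patch_file, run=subprocess.run):
    """Apply a patch with `git apply` and refuse to continue on failure."""
    result = run(
        ["git", "apply", patch_file],
        cwd=repo_dir, capture_output=True, text=True,
    )
    if result.returncode != 0:
        # Surface git's own message instead of only logging it.
        raise PatchApplyError(
            f"could not apply {patch_file}: {result.stderr.strip()}"
        )
```

With a check like this, an "already exists in working directory" error would stop the evaluation instead of letting the tests already on disk run.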

I wanted the bug report to be unambiguous, so I built a step-by-step reproduction using `django__django-16877` from SWE-bench Verified. The setup:

1. Submit a patch whose implementation is just `return None`. Evaluate it. The harness correctly reports it as unresolved.
2. Add `tests/template_tests/filter_tests/test_escapeseq.py` to the same patch, a file at the exact path the test patch would create. Fill it with trivially passing tests (`self.assertTrue(True)`).
3. Evaluate again. The `return None` implementation now passes all tests.
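For illustration, a poisoned test file of the kind described in the steps above might look like the sketch below. This is not the actual file from the bug report: the class and test names are made up, and only the marker string, the count of four tests, and the `self.assertTrue(True)` bodies come from the description.

```python
import unittest

# Marker printed at import time so the substitution shows up in logs.
print("POISONED_TEST_FILE_RETURN_NONE")


class PoisonedEscapeseqTests(unittest.TestCase):
    """Four tests that pass regardless of the implementation."""

    def test_one(self):
        self.assertTrue(True)

    def test_two(self):
        self.assertTrue(True)

    def test_three(self):
        self.assertTrue(True)

    def test_four(self):
        self.assertTrue(True)
```

None of these tests import or call the code under test, which is why a `return None` implementation passes them.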

The log output confirmed what happened: the test patch failed to apply, and the harness ran the poisoned tests instead. All four tests passed in 0.000 seconds, with the telltale `POISONED_TEST_FILE_RETURN_NONE` print statement visible in the output.

I also found a divergence: the hosted `sb-cli` backend was already robust to this attack, while the open-source `run_evaluation` was not. This meant the leaderboard was safe, but anyone running local evaluations (researchers, benchmark developers, other evaluation frameworks) was exposed.

I filed the issue with the full reproduction. The SWE-bench maintainers responded quickly with a layered fix in PR #539: instead of a single `git checkout` to reset test files (which broke when test patches only added new files), they split the reset into two operations:

- `git checkout {base_commit} {files}` (as before)
- `rm -f {files}` (safe removal without resetting the full tree)

This ensures that any agent-created file at a test patch path is removed before the test patch is applied, while preserving the rest of the working tree.
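A minimal sketch of how such a two-part reset could be assembled. This is not the code from PR #539. It assumes the split is by whether a test file already exists at the base commit: existing files get the checkout, and files the test patch adds get the removal. The function name and the `exists_at_base` callback are hypothetical.

```python
import shlex
from typing import Callable, Iterable, List


def build_reset_commands(
    base_commit: str,
    test_files: Iterable[str],
    exists_at_base: Callable[[str], bool],
) -> List[str]:
    """Build shell commands that reset only the test patch's files."""
    files = list(test_files)
    existing = [f for f in files if exists_at_base(f)]
    added = [f for f in files if not exists_at_base(f)]

    commands = []
    if existing:
        # Restore files that the base commit already tracks.
        quoted = " ".join(shlex.quote(f) for f in existing)
        commands.append(f"git checkout {shlex.quote(base_commit)} {quoted}")
    if added:
        # Remove anything sitting where the test patch will create a file.
        quoted = " ".join(shlex.quote(f) for f in added)
        commands.append(f"rm -f {quoted}")
    return commands
```

Passing only added files to `rm -f` avoids the original failure, where `git checkout` was given paths that do not exist at the base commit.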

Even battle-tested benchmarks can have blind spots. SWE-bench has been the standard for evaluating coding agents for years, yet a straightforward ordering issue in patch application let a submitted patch silently override held-out tests.

This matters because as agents get more capable, the attack surface of evaluation harnesses grows with them. Today's agents routinely create new files, run tests, and modify project structure. These behaviors can interact with evaluation infrastructure in unexpected ways. The assumption that "the agent only touches source code" no longer holds.

Agentic evaluation is harder than static evaluation. When the thing you're evaluating can modify its own environment, every file operation becomes a potential vector for accidental (or intentional) score inflation. Building robust harnesses requires thinking adversarially about every step in the evaluation pipeline.